A handy summary prepared by Jesse Sigal. Thanks, Jesse!

Advice

- Decide which properties are actually relevant for you to benchmark (candidates include accuracy, computational complexity, speed, memory usage, average/best/worst-case behaviour, power usage, degree of achievable parallelism, probability of failure, wall-clock time, and performance versus time for anytime algorithms).

- Make sure you run on appropriate data, including randomly generated (but representative) data, and analyse the results statistically.

- Consider using multiple datasets and cross-validation.

- Consider the extreme cases as well.

- Find benchmarks the field will care about.
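As a rough illustration of the advice on random data, statistical analysis, and extreme cases, here is a minimal Python sketch. Everything in it is an assumption made for the example rather than something taken from the summary: the helper names (`time_once`, `benchmark`, `make_inputs`), the choice of `sorted` as the function under test, the input size, the seed, and the normal-approximation confidence interval.

```python
import random
import statistics
import time


def time_once(func, data):
    """Return the wall-clock seconds taken by one call of func on a copy of data."""
    payload = list(data)  # copy so every repetition sees identical input
    start = time.perf_counter()
    func(payload)
    return time.perf_counter() - start


def benchmark(func, data, repetitions=30, warmup=3):
    """Time func repeatedly and summarise with a mean and an approximate 95% CI."""
    for _ in range(warmup):
        time_once(func, data)  # warm-up runs are discarded
    samples = [time_once(func, data) for _ in range(repetitions)]
    mean = statistics.mean(samples)
    # Normal approximation (z = 1.96); an assumption that suits ~30+ samples.
    half_width = 1.96 * statistics.stdev(samples) / len(samples) ** 0.5
    return {
        "mean": mean,
        "ci_low": mean - half_width,
        "ci_high": mean + half_width,
        "min": min(samples),
        "max": max(samples),
    }


def make_inputs(size=10_000, seed=42):
    """Build seeded random data plus a few extreme cases (hypothetical choices)."""
    rng = random.Random(seed)  # fixed seed keeps the experiment reproducible
    random_data = [rng.random() for _ in range(size)]
    return {
        "random": random_data,
        "empty": [],
        "already_sorted": sorted(random_data),
        "reversed": sorted(random_data, reverse=True),
        "all_equal": [0.5] * size,
    }


if __name__ == "__main__":
    for name, data in make_inputs().items():
        result = benchmark(sorted, data)
        print(
            f"{name:>15}: mean={result['mean']:.6f}s "
            f"95% CI=[{result['ci_low']:.6f}, {result['ci_high']:.6f}]"
        )
```

Reporting an interval next to the mean makes it easier to judge whether a difference between two configurations is larger than the run-to-run noise. For small sample counts, a Student's t interval would be a more careful choice than the normal approximation used here.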

Books

- “Writing for Computer Science” by Justin Zobel

- “The Art of Computer Systems Performance Analysis” (1991) by Raj Jain

Papers

- A. Crapé and L. Eeckhout, “A Rigorous Benchmarking and Performance Analysis Methodology for Python Workloads,” 2020 IEEE International Symposium on Workload Characterization (IISWC), Beijing, China, 2020, pp. 83-93, doi: 10.1109/IISWC50251.2020.00017.

- A. Georges, D. Buytaert, and L. Eeckhout, “Statistically rigorous Java performance evaluation,” OOPSLA '07: Proceedings of the 22nd Annual ACM SIGPLAN Conference on Object-Oriented Programming Systems, Languages and Applications, October 2007, https://doi.org/10.1145/1297027.1297033

- Benchmarking Crimes: An Emerging Threat in Systems Security. van der Kouwe, E.; Andriesse, D.; Bos, H.; Giuffrida, C.; and Heiser, G. Technical Report arXiv preprint arXiv:1801.02381, January 2018.

- Hoefler, Torsten, and Roberto Belli. "Scientific benchmarking of parallel computing systems: twelve ways to tell the masses when reporting performance results." Proceedings of the international conference for high performance computing, networking, storage and analysis. 2015.

- Hunold, Sascha, and Alexandra Carpen-Amarie. "Reproducible MPI benchmarking is still not as easy as you think." IEEE Transactions on Parallel and Distributed Systems 27.12 (2016): 3617-3630.

Online resources

- http://gernot-heiser.org/benchmarking-crimes.html

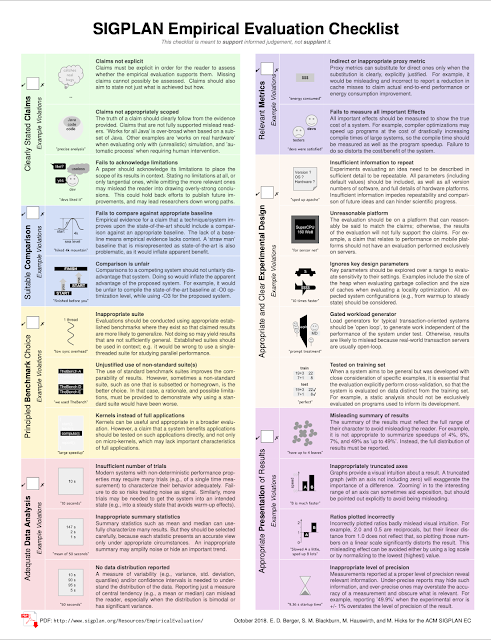

- https://www.sigplan.org/Resources/EmpiricalEvaluation/

- https://www.acm.org/publications/policies/artifact-review-and-badging-current
